Within multimedia and communication contexts, the human face serves as a dynamic medium capable of expressing emotions and fostering connections. AI-generated talking faces represent an advancement with potential implications across various domains. These include enhancing digital communication, improving accessibility for individuals with communicative impairments, revolutionizing education through AI tutoring, and offering therapeutic and social support in healthcare settings. This technology stands to enrich human-AI interactions and reshape diverse fields.

Numerous approaches have emerged for creating talking faces from audio, yet current techniques fall short of achieving the authenticity of natural speech. While lip synchronization accuracy has improved, expressive facial dynamics and lifelike nuances receive inadequate attention, resulting in rigid and unconvincing generated faces. Though some studies address realistic head motions, a significant disparity persists compared to human movement patterns. Also, generation efficiency is crucial for real-time applications, but computational demands hinder practicality. Bridging this gap requires optimized algorithms balancing high-quality synthesis and low-latency demands for interactive systems.

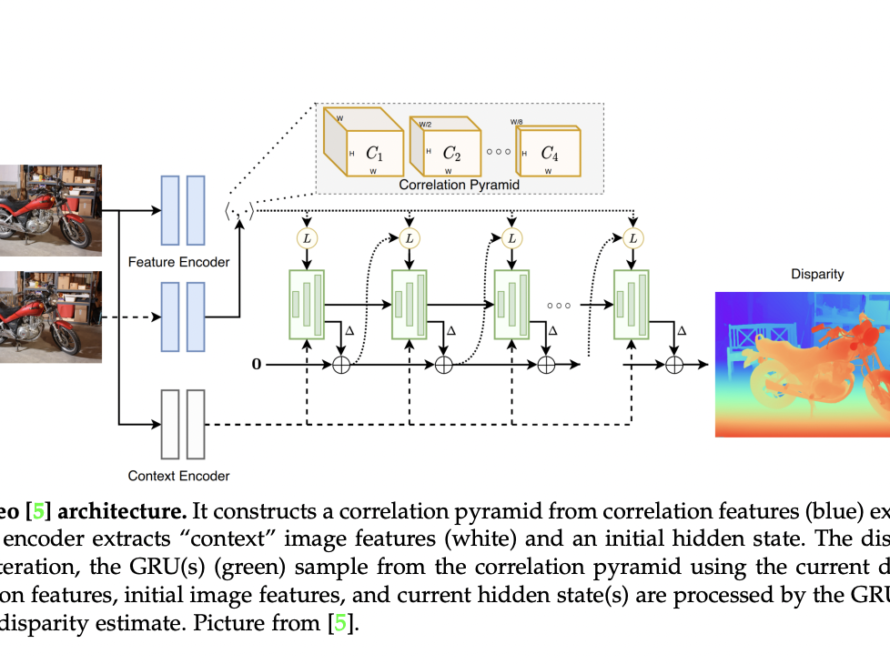

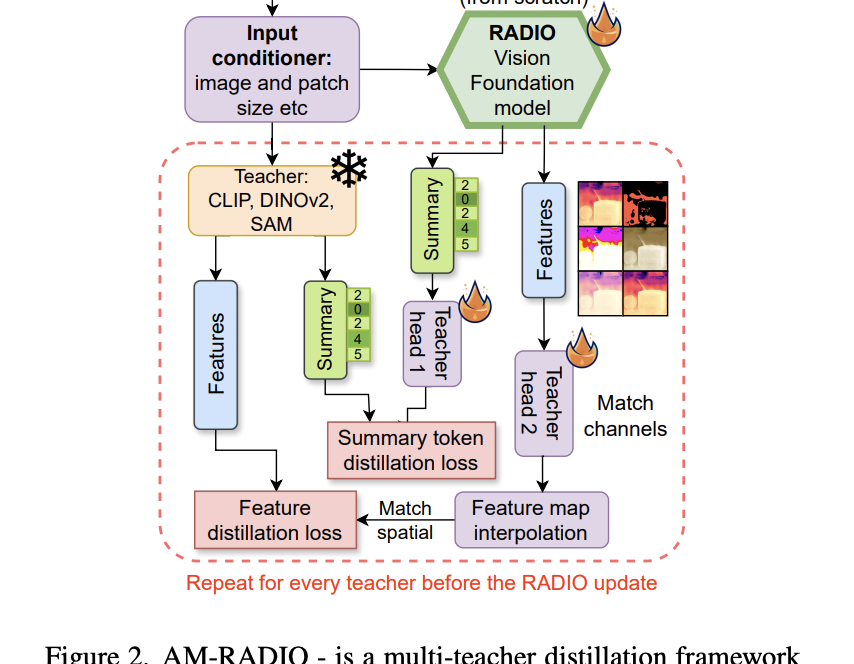

Microsoft researchers introduce VASA, a framework for generating lifelike talking faces endowed with appealing visual affective skills (VAS) from a single static image and a speech audio clip. Their premier model, VASA-1, achieves precise lip synchronization and captures a broad range of facial nuances and natural head movements, enhancing authenticity and liveliness. Its key innovations are a diffusion-based model that generates holistic facial dynamics and head movements within a face latent space, and the construction of that expressive, disentangled face latent space from videos.

VASA aims to generate lifelike videos of a given face speaking with provided audio. It emphasizes clear image frames, precise lip sync, expressive facial dynamics, and natural head poses. Optional control signals guide generation. Holistic facial dynamics and head motion are generated in a latent space conditioned on audio. A face latent space is constructed, and diffusion transformers are utilized for motion generation. Conditioning signals like audio features and gaze direction enhance controllability. At inference, appearance and identity features are extracted, and motion sequences are generated to produce the final video.
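The inference flow described above can be sketched in a few lines of Python. This is only an illustration of the data flow, not the researchers' implementation: every function name, dimension, and the linear "denoiser" below is a hypothetical stand-in chosen so the example runs without a trained model. It shows the three stages named in the text: extracting appearance and identity features from one image, iteratively refining noise into a motion-latent sequence conditioned on audio features and gaze direction, and decoding one frame per motion latent.

```python
import numpy as np

rng = np.random.default_rng(0)

LATENT_DIM = 8  # hypothetical size of one motion latent (facial dynamics + head pose)
AUDIO_DIM = 4   # hypothetical size of one per-frame audio feature


def encode_face(image):
    """Stand-in encoder: split a source image into appearance and identity codes."""
    flat = image.reshape(-1).astype(np.float64)
    return {"appearance": flat[:16], "identity": flat[16:32]}


def toy_denoiser(noisy_motion, audio_feats, gaze, step_frac):
    """Stand-in for the diffusion transformer.

    A real model would be a trained network that uses all of its inputs.
    This toy version ignores noisy_motion and step_frac and returns a fixed
    function of the conditioning signals, only so that the loop is runnable.
    """
    audio_part = np.tile(audio_feats, (1, LATENT_DIM // AUDIO_DIM))  # (T, LATENT_DIM)
    gaze_part = np.resize(gaze, LATENT_DIM)                          # (LATENT_DIM,)
    return audio_part + gaze_part


def generate_motion(audio_feats, gaze, num_steps=10):
    """Refine random noise into a motion-latent sequence, conditioned on audio and gaze."""
    num_frames = audio_feats.shape[0]
    motion = rng.standard_normal((num_frames, LATENT_DIM))
    for step in range(num_steps):
        step_frac = (step + 1) / num_steps
        predicted_clean = toy_denoiser(motion, audio_feats, gaze, step_frac)
        # Move part of the way from the current sample toward the prediction.
        motion = motion + step_frac * (predicted_clean - motion)
    return motion


def decode_frames(face_codes, motion):
    """Stand-in decoder: one tiny 'frame' per motion latent, modulated by appearance."""
    appearance = face_codes["appearance"][:LATENT_DIM]
    return np.stack([np.outer(appearance, m) for m in motion])


image = rng.random((8, 8))                 # placeholder for the single source image
audio_feats = rng.random((25, AUDIO_DIM))  # placeholder features for 25 video frames
gaze = np.array([0.1, -0.2])               # optional control signal (hypothetical format)

face_codes = encode_face(image)            # extracted once per identity
motion = generate_motion(audio_feats, gaze)
frames = decode_frames(face_codes, motion)
print(frames.shape)  # (25, 8, 8)
```

The point of the sketch is the separation of concerns: the appearance and identity codes are computed once from the still image, while only the motion sequence depends on the audio, which is what lets the same portrait be driven by any speech clip.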

The researchers compared VASA-1 with existing audio-driven talking face generation techniques: MakeItTalk, Audio2Head, and SadTalker. Results show that VASA-1 outperforms them across metrics on the VoxCeleb2 and OneMin-32 benchmarks. It achieved higher audio-lip synchronization, better pose alignment, and lower Fréchet Video Distance (FVD), indicating higher quality and realism than existing methods; on some metrics, the reported scores even exceed those measured on real videos.
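For readers unfamiliar with FVD, it is a Fréchet distance between two Gaussians fitted to feature vectors of real and generated videos, so lower values mean the generated distribution is closer to the real one. The sketch below computes that distance with NumPy. It assumes the features have already been extracted by some pretrained video network; the random arrays used here are placeholders, and the function name `frechet_distance` is our own, not taken from the paper's evaluation code.

```python
import numpy as np


def frechet_distance(feats_a, feats_b):
    """Fréchet distance between Gaussians fitted to two feature sets.

    feats_a, feats_b: arrays of shape (num_samples, feature_dim).
    Computes ||mu_a - mu_b||^2 + Tr(C_a + C_b - 2 (C_a C_b)^{1/2}).
    """
    mu_a, mu_b = feats_a.mean(axis=0), feats_b.mean(axis=0)
    cov_a = np.cov(feats_a, rowvar=False)
    cov_b = np.cov(feats_b, rowvar=False)

    # Tr((C_a C_b)^{1/2}) equals the sum of square roots of the eigenvalues
    # of C_a C_b, which are real and non-negative for covariance matrices.
    eigvals = np.linalg.eigvals(cov_a @ cov_b)
    trace_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()

    mean_term = np.sum((mu_a - mu_b) ** 2)
    return float(mean_term + np.trace(cov_a) + np.trace(cov_b) - 2.0 * trace_sqrt)


rng = np.random.default_rng(0)
real_feats = rng.standard_normal((200, 4))  # placeholder "real video" features
shifted_feats = real_feats + 3.0            # same spread, mean shifted by 3 in each dimension

same = frechet_distance(real_feats, real_feats)
shifted = frechet_distance(real_feats, shifted_feats)
print(round(same, 6), round(shifted, 6))
```

Comparing a feature set with itself gives a distance of essentially zero, while shifting every feature by 3 across 4 dimensions leaves the covariance unchanged and yields a distance of about 4 × 3² = 36, all of it from the mean term.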

To sum up, Microsoft researchers present VASA-1, an audio-driven talking face generation model that efficiently produces realistic lip synchronization, expressive facial dynamics, and natural head movements from a single image and an audio input. It surpasses existing methods in both video quality and performance efficiency, showcasing promising visual affective skills in generated face videos. The key innovation lies in a holistic facial dynamics and head movement generation model operating within an expressive and disentangled face latent space. These advancements could transform human-human and human-AI interactions in communication, education, and healthcare.
